
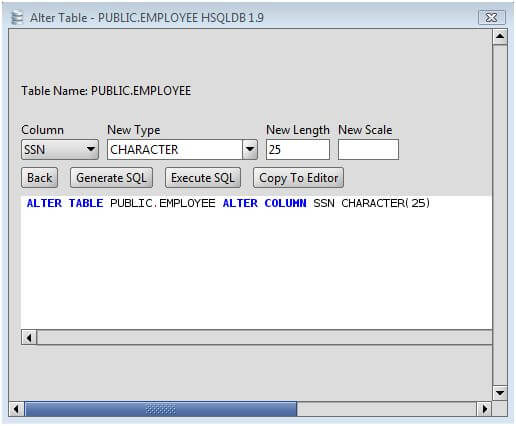

10/5/2023

Redshift alter table column size

An alternative to recreating a column is to alter it in place, for example ALTER TABLE schema.table ALTER COLUMN field TYPE varchar(1024). On Redshift this is a notably better approach than creating a new field, copying the data, renaming it, and dropping the old column. That approach generates overhead and updates every row, so running VACUUM on a big table afterwards takes time. Where possible, the same approach also works when reducing a field's size, with one extra step: first check that the old values fit in the new size (select max(len(field)) from schema.table).

The spark-redshift connector uses the redshift-jdbc connector under the hood and automatically triggers the appropriate COPY and UNLOAD commands on AWS Redshift. You can also specify extracopyoptions, a list of extra options to append to the Redshift COPY command when loading data.
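The pre-check and the in-place ALTER can be scripted together. Below is a minimal Python sketch. The helper names max_len_query and build_alter_varchar are hypothetical. It assumes you have already run the max(len(field)) query and pass its result in. It only builds SQL strings and does not quote or escape identifiers, so it should be used with trusted names only. It also assumes single-byte data: Redshift VARCHAR sizes are counted in bytes while LEN counts characters, so multibyte data would need an octet_length check instead.

```python
from typing import Optional

# Hypothetical helpers: they only build SQL text and do not quote or escape
# identifiers, so pass trusted schema/table/column names only.

REDSHIFT_MAX_VARCHAR = 65535  # largest VARCHAR size Redshift allows (bytes)


def max_len_query(schema: str, table: str, column: str) -> str:
    """Pre-check query: length of the longest existing value in the column."""
    return f"SELECT max(len({column})) FROM {schema}.{table}"


def build_alter_varchar(schema: str, table: str, column: str,
                        new_size: int, current_max_len: Optional[int]) -> str:
    """Return an in-place ALTER COLUMN ... TYPE varchar(n) statement.

    current_max_len is assumed to be the result of max_len_query(), run
    beforehand. Assumption: single-byte data. Redshift VARCHAR sizes are in
    bytes while LEN counts characters, so for multibyte (UTF-8) data compare
    against max(octet_length(column)) instead.
    """
    if not 1 <= new_size <= REDSHIFT_MAX_VARCHAR:
        raise ValueError(f"varchar size must be 1..{REDSHIFT_MAX_VARCHAR}")
    if current_max_len is None:
        current_max_len = 0  # max() returns NULL for an empty or all-NULL column
    # Shrinking is only safe if the longest existing value still fits.
    if current_max_len > new_size:
        raise ValueError(
            f"{schema}.{table}.{column}: longest value ({current_max_len}) "
            f"does not fit in varchar({new_size})"
        )
    return (f"ALTER TABLE {schema}.{table} "
            f"ALTER COLUMN {column} TYPE varchar({new_size})")


if __name__ == "__main__":
    print(max_len_query("schema", "table", "field"))
    print(build_alter_varchar("schema", "table", "field", 1024, 300))
```

Raising an error instead of silently truncating matches the intent of the pre-check: if the longest value does not fit, the ALTER should not be attempted.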

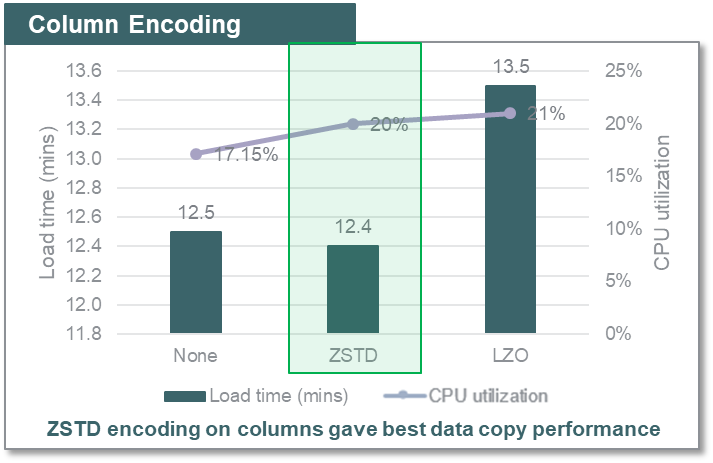

When a field's type changes and the new field size is "bigger" than the previous one, dbt should ALTER the column rather than recreate it as a new column. This would be a straightforward extension of existing dbt-redshift functionality. Recreating the column takes four steps:

1. Alter the table to add newcolumn.
2. Update newcolumn with the value of oldcolumn.
3. Alter the table to drop oldcolumn.
4. Alter the table to rename newcolumn to oldcolumn.

Compression is a column-level operation that reduces the size of data when it is stored. It conserves storage space and reduces the size of data read from storage, which reduces disk I/O and therefore improves query performance (Amazon Redshift Database Developer Guide, "Working with column compression").
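The add/update/drop/rename workaround can likewise be written out as a list of statements. This is a hypothetical Python helper (recreate_column_statements is not part of dbt or any library). It only generates SQL text and appends an optional VACUUM, because the full-table UPDATE is what makes a later VACUUM expensive on a big table.

```python
from typing import List


def recreate_column_statements(table: str, old_col: str, new_col: str,
                               new_type: str, vacuum: bool = True) -> List[str]:
    """Hypothetical helper: SQL for the add/update/drop/rename workaround.

    Identifiers are not quoted or escaped; use trusted names only.
    """
    statements = [
        # 1. Add the new column (Redshift appends it as the last column).
        f"ALTER TABLE {table} ADD COLUMN {new_col} {new_type}",
        # 2. Copy the value across; this touches every row in the table.
        f"UPDATE {table} SET {new_col} = {old_col}",
        # 3. Drop the original column.
        f"ALTER TABLE {table} DROP COLUMN {old_col}",
        # 4. Give the new column the original name.
        f"ALTER TABLE {table} RENAME COLUMN {new_col} TO {old_col}",
    ]
    if vacuum:
        # The full-table UPDATE leaves deleted row versions behind,
        # which VACUUM then has to reclaim.
        statements.append(f"VACUUM {table}")
    return statements


if __name__ == "__main__":
    for sql in recreate_column_statements("schema.table", "field",
                                          "field_new", "varchar(1024)"):
        print(sql + ";")
```

Compared with the single in-place ALTER COLUMN ... TYPE statement, this path rewrites every row and changes the column's position in the table, which is why altering in place is preferred when it is allowed.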

To alter the length (size) of a column in Amazon Redshift, use the ALTER COLUMN column_name TYPE clause of the ALTER TABLE statement. This TYPE clause updates the length of a VARCHAR column. An issue with the feature was once identified in very specific cases, and the ALTER column VARCHAR size functionality was temporarily disabled.

If the column is used by a view, remember that a view can be built on one or more tables, so you need to change the column size in all of its base tables:

2- The above query gives you a table in which you will find each base table under the column name REFERENCEDNAME.
3- Now change the column size of that base table.

Another option is to create a new Table-B that contains the new columns, plus an identity column (e.g. customerid) that matches Table-A.

I have tried changing the column data type in Redshift via SQL. I also tried writing the ALTER TABLE script before the SELECT lines, but that did not work either:

(30) Error occurred while trying to execute a query: ERROR: syntax error at or near "TABLE" LINE 17: ALTER TABLE bmd_disruption_fv ^

Another error message reads: Unable to connect to the Amazon Redshift server '.0.sq.com.sg'. Check that the server is running and that you have access privileges to the requested database.

Query performance guidelines: Avoid using SELECT *; include only the columns you specifically need. Use a CASE expression to perform complex aggregations instead of selecting from the same table multiple times. Don't use cross-joins unless absolutely necessary: these joins without a join condition result in the Cartesian product of two tables.

PG_TABLE_DEF only returns information about tables that are visible to the user. If PG_TABLE_DEF does not return the expected results, verify that the search_path parameter is set correctly to include the relevant schemas.

The following query gives you the size (MB) of each column: SELECT TRIM (name) as tablename, TRIM (pg_attribute. … It counts the number of data blocks, where each block uses 1 MB, grouped by table and column.
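The column-size query counts 1 MB blocks per table and column. The same arithmetic can be sketched in Python. This assumes the per-block rows have already been fetched as (table, column) pairs, for example from the SVV_DISKUSAGE system view, which has one row per data block. column_sizes_mb is a hypothetical name, and fetching the rows is not shown.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

BLOCK_SIZE_MB = 1  # each Redshift data block is 1 MB


def column_sizes_mb(
    block_rows: Iterable[Tuple[str, str]]
) -> Dict[Tuple[str, str], int]:
    """Size in MB per (table, column), given one (table, column) row per block."""
    blocks_per_column = Counter(block_rows)
    return {key: count * BLOCK_SIZE_MB for key, count in blocks_per_column.items()}


if __name__ == "__main__":
    rows = [("sales", "price"), ("sales", "price"), ("sales", "qty")]
    # Print the largest columns first.
    for (table, column), mb in sorted(column_sizes_mb(rows).items(),
                                      key=lambda item: item[1], reverse=True):
        print(f"{table}.{column}: {mb} MB")
```

Because every block has the same fixed size, the MB figure is just the block count times one. The grouping by (table, column) is what does the real work, mirroring the GROUP BY in the SQL version.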
